Many of the pioneers who began developing artificial neural networks weren't sure how they actually worked - and we're no more certain today.

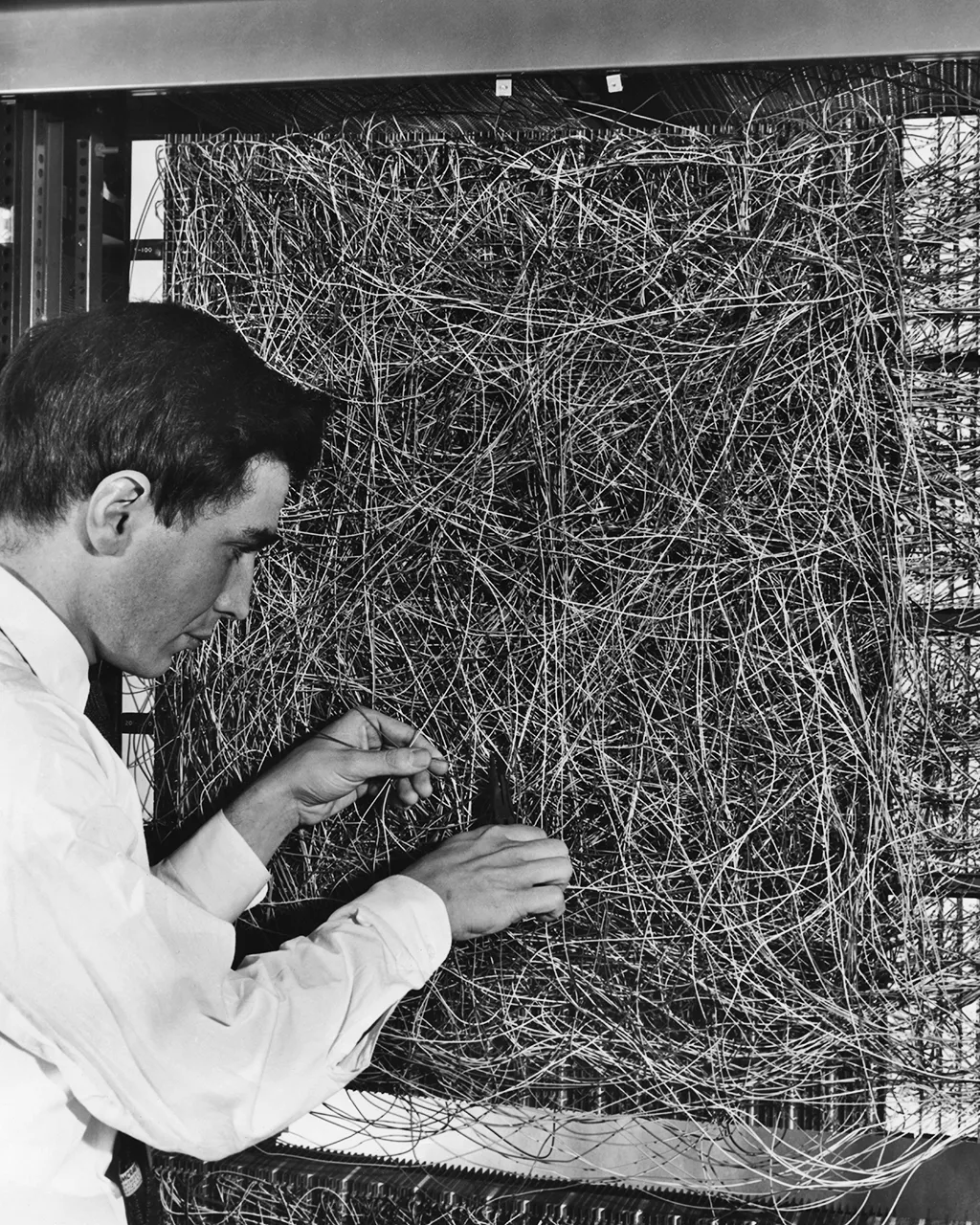

In 1956, while in his early 20s and on a year-long trip to London, the mathematician and theoretical biologist Jack D Cowan visited Wilfred Taylor and his strange new "learning machine". On his arrival he was baffled by the "huge bank of apparatus" that confronted him. Cowan could only stand and watch "the machine doing its thing". The thing it appeared to be doing was performing an "associative memory scheme" – it seemed to be able to learn how to find connections and retrieve data.

It may have looked like clunky blocks of circuitry, soldered together by hand in a mass of wires and boxes, but what Cowan was witnessing was an early analogue form of a neural network – a precursor to the most advanced artificial intelligence of today, including the much discussed ChatGPT with its ability to generate written content in response to almost any command. ChatGPT's underlying technology is a neural network.

As Cowan and Taylor stood and watched the machine work, they had no idea exactly how it was managing to perform this task. The answer to Taylor's mystery machine brain can be found somewhere in its "analogue neurons", in the associations made by its machine memory and, most importantly, in the fact that its automated functioning couldn't really be fully explained. It would take decades for these systems to find their purpose and for that power to be unlocked.

The term neural network incorporates a wide range of systems, yet centrally, according to IBM, these "neural networks – also known as artificial neural networks (ANNs) or simulated neural networks (SNNs) – are a subset of machine learning and are at the heart of deep learning algorithms". Crucially, the term itself and their form and structure are "inspired by the human brain, mimicking the way that biological neurons signal to one another".

There may have been some residual doubt about their value in the early stages, but as the years have passed AI fashions have swung firmly towards neural networks. They are now often understood to be the future of AI. They have big implications for us and for what it means to be human. We have heard echoes of these concerns recently with calls to pause new AI developments for a six-month period to ensure confidence in their implications.

It would certainly be a mistake to dismiss the neural network as being solely about glossy, eye-catching new gadgets. They are already well established in our lives. Some are powerful in their practicality. As far back as 1989, a team at AT&T Bell Laboratories used back-propagation techniques to train a system to recognise handwritten postal codes. The recent announcement by Microsoft that Bing searches will be powered by AI, making it your "copilot for the web", illustrates how the things we discover and how we understand them will increasingly be a product of this type of automation.

Drawing on vast data to find patterns, AI systems can similarly be trained to do things like image recognition at speed – resulting in them being incorporated into facial recognition, for instance. This ability to identify patterns has led to many other applications, such as predicting stock markets.

Neural networks are changing how we interpret and communicate too. Developed by the Google Brain Team, Google Translate is another prominent application of a neural network.

You wouldn't want to play Chess or Shogi with one either. Their grasp of rules and their recall of strategies and all recorded moves mean that they are exceptionally good at games (although ChatGPT seems to struggle with Wordle). The systems that are troubling human Go players (Go is a notoriously tricky strategy board game) and Chess grandmasters are made from neural networks.

But their reach goes far beyond these instances and continues to expand. A search of patents restricted only to mentions of the exact phrase "neural networks" produced 135,828 results at the time of writing. With this rapid and ongoing expansion, the chances of us being able to fully explain the influence of AI may become ever thinner. These are the questions I have been examining in my research and my new book on algorithmic thinking.

Mysterious layers of 'unknowability'

Looking back at the history of neural networks tells us something important about the automated decisions that define our present or those that will have a possibly more profound impact in the future. Their presence also tells us that we are likely to understand the decisions and impacts of AI even less over time. These systems are not simply black boxes; they are not just hidden bits of a system that can't be seen or understood.

It is something different, something rooted in the aims and design of these systems themselves. There is a long-held pursuit of the unexplainable. The more opaque, the more authentic and advanced the system is thought to be. It is not just about the systems becoming more complex or the control of intellectual property limiting access (although these are part of it). It is instead to say that the ethos driving them has a particular and embedded interest in "unknowability". The mystery is even coded into the very form and discourse of the neural network. They come with deeply piled layers – hence the phrase deep learning – and within those depths are the even more mysterious sounding "hidden layers". The mysteries of these systems are deep below the surface.

Today there is a strong push for AI that is explainable. We want to know how it works and how it arrives at decisions and outcomes. The European Union is so concerned by the potentially "unacceptable risks" and even "dangerous" applications that it is currently advancing a new AI Act intended to set a global standard for "the development of secure, trustworthy and ethical artificial intelligence".

Those new laws will be based on a need for explainability, demanding that "for high-risk AI systems, the requirements of high-quality data, documentation and traceability, transparency, human oversight, accuracy and robustness, are strictly necessary to mitigate the risks to fundamental rights and safety posed by AI". This is not just about things like self-driving cars (although systems that ensure safety fall into the EU's category of high-risk AI); it is also a worry that systems will emerge in the future that will have implications for human rights.

This is part of wider calls for transparency in AI so that its activities can be checked, audited and assessed. Another example would be the Royal Society's policy briefing on explainable AI in which they point out that "policy debates across the world increasingly see calls for some form of AI explainability, as part of efforts to embed ethical principles into the design and deployment of AI-enabled systems".

But the story of neural networks tells us that we are likely to get further away from that objective in the future, rather than closer to it.

Inspired by the human brain

These neural networks may be complex systems, yet they have some core principles. Inspired by the human brain, they seek to copy or simulate forms of biological and human thinking. In terms of structure and design they are, as IBM also explains, comprised of "node layers, containing an input layer, one or more hidden layers, and an output layer". Within this, "each node, or artificial neuron, connects to another". Because they require inputs and information to create outputs, they "rely on training data to learn and improve their accuracy over time". These technical details matter but so too does the wish to model these systems on the complexities of the human brain.
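As a rough illustration of the structure IBM describes, the sketch below passes two input values through one hidden layer and then an output layer. It is not taken from any system mentioned here: the layer sizes, weights and biases are invented for the example, and in a real network they would be learned from training data rather than written by hand.

```python
import math


def sigmoid(x):
    """Squash any number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-x))


def layer(inputs, weights, biases):
    """One layer of nodes: each node takes a weighted sum of every
    input, adds its bias, and applies the activation function."""
    return [
        sigmoid(sum(w * x for w, x in zip(node_weights, inputs)) + b)
        for node_weights, b in zip(weights, biases)
    ]


# Hypothetical, hand-picked values (assumptions for illustration only).
hidden_weights = [[0.5, -0.4], [0.3, 0.8], [-0.6, 0.1]]  # 3 hidden nodes, 2 inputs each
hidden_biases = [0.0, 0.1, -0.1]
output_weights = [[0.7, -0.2, 0.5]]  # 1 output node, 3 hidden inputs
output_biases = [0.05]


def forward(inputs):
    """Input layer -> hidden layer -> output layer."""
    hidden = layer(inputs, hidden_weights, hidden_biases)
    output = layer(hidden, output_weights, output_biases)
    return hidden, output


hidden, output = forward([1.0, 0.0])
print("hidden layer values:", hidden)
print("output layer value:", output)
```

Even at this toy scale, the three hidden values carry no obvious meaning on their own, which hints at why much larger networks are hard to explain.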

Grasping the ambition behind these systems is vital in understanding what these technical details have come to mean in practice. In a 1993 interview, the neural network scientist Teuvo Kohonen concluded that a "self-organising" system "is my dream", operating "something like what our nervous system is doing instinctively". As an example, Kohonen pictured how a system that monitored and managed itself "could be used as a monitoring panel for any machine... in every airplane, jet plane, or every nuclear power station, or every car". This, he thought, would mean that in the future "you could see immediately what condition the system is in".

The overarching objective was to have a system capable of adapting to its surroundings. It would be instant and autonomous, operating in the style of the nervous system. That was the dream, to have systems that could handle themselves without the need for much human intervention. The complexities and unknowns of the brain, the nervous system and the real world would soon come to inform the development and design of neural networks.

Mimicking the brain – layer after layer

You may already have noticed that when discussing neural networks the image of the brain and the complexity this evokes are never far away. The human brain acted as a sort of template for these systems. In the early stages, in particular, the brain – still one of the great unknowns – became a model for how the neural network might function.

So, these experimental new systems were modelled on something whose functioning was itself largely unknown. The neurocomputing engineer Carver Mead has spoken revealingly of the conception of a "cognitive iceberg" that he had found particularly appealing. It is only the tip of the iceberg of consciousness of which we are aware and which is visible. The scale and form of the rest remains unknown below the surface.

In 1998, James Anderson, who had been working for some time on neural networks, noted that when it came to research on the brain "our major discovery seems to be an awareness that we really don't know what is going on".

In a detailed account in the Financial Times in 2018, technology journalist Richard Waters noted how neural networks "are modelled on a theory about how the human brain operates, passing data through layers of artificial neurons until an identifiable pattern emerges". This creates a knock-on problem, Waters proposed, as "unlike the logic circuits employed in a traditional software program, there is no way of tracking this process to identify exactly why a computer comes up with a particular answer". Waters' conclusion is that these outcomes cannot be unpicked.

The application of this type of model of the brain, taking the data through many layers, means that the answer cannot readily be retraced. The multiple layering is a good part of the reason for this.

'Adaptation is the whole game'

Scientists like Mead and Kohonen wanted to create a system that could genuinely adapt to the world in which it found itself. It would respond to its conditions. Mead was clear that the value in neural networks was that they could facilitate this type of adaptation. At the time, and reflecting on this ambition, Mead added that producing adaptation "is the whole game". This adaptation is needed, he thought, "because of the nature of the real world", which he concluded is "too variable to do anything absolute".

This problem needed to be reckoned with especially as, he thought, this was something "the nervous system figured out a long time ago". Not only were these innovators working with an image of the brain and its unknowns, they were combining this with a vision of the "real world" and the uncertainties, unknowns and variability that this brings. The systems, Mead thought, needed to be able to respond and adapt to circumstances without instruction.

Around the same time in the 1990s, Stephen Grossberg – an expert in cognitive systems working across maths, psychology and biomedical engineering – also argued that adaptation was going to be the important step in the longer term. Grossberg, as he worked away on neural network modelling, thought to himself that it is all "about how biological measurement and control systems are designed to adapt quickly and stably in real time to a rapidly fluctuating world". As we saw earlier with Kohonen's "dream" of a "self-organising" system, a notion of the "real world" becomes the context in which response and adaptation are being coded into these systems. How that real world is understood and imagined undoubtedly shapes how these systems are designed to adapt.

Hidden layers

As the layers multiplied, deep learning plumbed new depths. The neural network is trained using training data that, computer science writer Larry Hardesty explained, "is fed to the bottom layer – the input layer – and it passes through the succeeding layers, getting multiplied and added together in complex ways, until it finally arrives, radically transformed, at the output layer". The more layers, the greater the transformation and the greater the distance from input to output. The development of Graphics Processing Units (GPUs), in gaming for instance, Hardesty added, "enabled the one-layer networks of the 1960s and the two to three-layer networks of the 1980s to blossom into the 10, 15, or even 50-layer networks of today".
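To make Hardesty's description of data being "multiplied and added together" layer after layer a little more concrete, here is a minimal sketch that builds a shallow and a deep network and pushes the same input through both. Everything in it is an assumption made for illustration: the layer widths, the random untrained weights, the tanh activation and the absence of bias terms are simplifications, not a description of any real system.

```python
import math
import random


def make_network(layer_sizes, seed=0):
    """Build one weight matrix per pair of adjacent layers, filled with
    random values (hypothetical, untrained weights)."""
    rng = random.Random(seed)
    return [
        [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
        for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    ]


def forward(network, inputs):
    """Pass the input through every layer in turn, keeping each
    layer's values so the intermediate steps can be inspected."""
    activations = [list(inputs)]
    for weights in network:
        previous = activations[-1]
        activations.append(
            [math.tanh(sum(w * x for w, x in zip(row, previous))) for row in weights]
        )
    return activations


def count_weights(network):
    """Total number of individual weights across all layers."""
    return sum(len(row) for weights in network for row in weights)


shallow = make_network([4, 8, 2])             # one hidden layer
deep = make_network([4] + [8] * 10 + [2])     # ten hidden layers

sample = [0.2, -0.5, 0.9, 0.1]
print("shallow weights:", count_weights(shallow))  # 48
print("deep weights:", count_weights(deep))        # 624
print("final output of deep network:", forward(deep, sample)[-1])
```

Each extra layer adds another round of multiplication and addition between input and output, so the number of values that would have to be interpreted to retrace an answer grows quickly with depth.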

Neural networks are getting deeper. Indeed, it's this adding of layers, according to Hardesty, that is "what the 'deep' in 'deep learning' refers to". This matters, he proposes, because "currently, deep learning is responsible for the best-performing systems in almost every area of artificial intelligence research".

But the mystery gets deeper still. As the layers of neural networks have piled higher their complexity has grown. It has also led to the growth in what are referred to as "hidden layers" within these depths. The discussion of the optimum number of hidden layers in a neural network is ongoing. The media theorist Beatrice Fazi has written that "because of how a deep neural network operates, relying on hidden neural layers sandwiched between the first layer of neurons (the input layer) and the last layer (the output layer), deep-learning techniques are often opaque or illegible even to the programmers that originally set them up".

As the layers increase (including those hidden layers) they become even less explainable – even, as it turns out, again, to those creating them. Making a similar point, the prominent and interdisciplinary new media thinker Katherine Hayles also noted that there are limits to "how much we can know about the system, a result relevant to the 'hidden layer' in neural net and deep learning algorithms".

Pursuing the unexplainable

Taken together, these long developments are part of what the sociologist of technology Taina Bucher has called the "problematic of the unknown". Expanding his influential research on scientific knowledge into the field of AI, Harry Collins has pointed out that the objective with neural nets is that they may be produced by a human, initially at least, but "once written the program lives its own life, as it were; without huge effort, exactly how the program is working can remain mysterious". This has echoes of those long-held dreams of a self-organising system.

I'd add to this that the unknown and maybe even the unknowable have been pursued as a fundamental part of these systems from their earliest stages. There is a good chance that the greater the impact that artificial intelligence comes to have in our lives the less we will understand how or why.

But that doesn't sit well with many today. We want to know how AI works and how it arrives at the decisions and outcomes that impact us. As developments in AI continue to shape our knowledge and understanding of the world, what we discover, how we are treated, how we learn, consume and interact, this impulse to understand will grow. When it comes to explainable and transparent AI, the story of neural networks tells us that we are likely to get further away from that objective in the future, rather than closer to it.

* David Beer is professor of sociology at the University of York and is the author of The Tensions of Algorithmic Thinking: Automation, Intelligence and the Politics of Knowing
